Steve Spangler Science

Most Popular Experiments

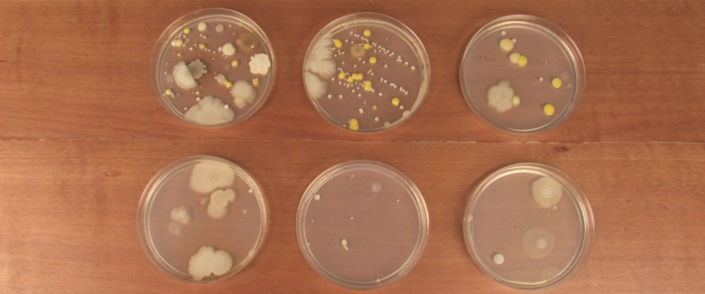

Free Experiments for Kids Resources

Our website is the easiest-to-search resource for kids' science experiments that can be performed without buying expensive materials.

What’s Trending

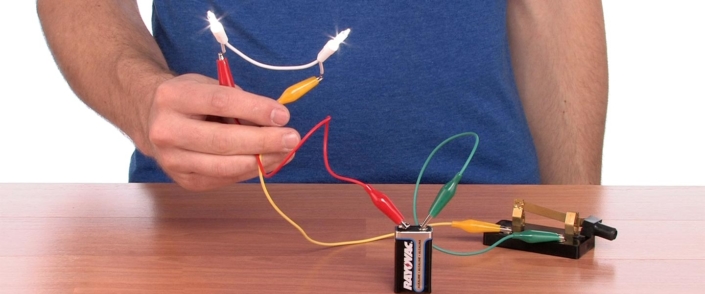

CHECK OUT OUR BEST SELLERS!

For more than 25 years, Steve Spangler Science has been bringing FUN to science with these popular items!